Vendors Promised 'Single Pane of Glass' for 15 Years. Now They're Calling It an Agentic Layer.

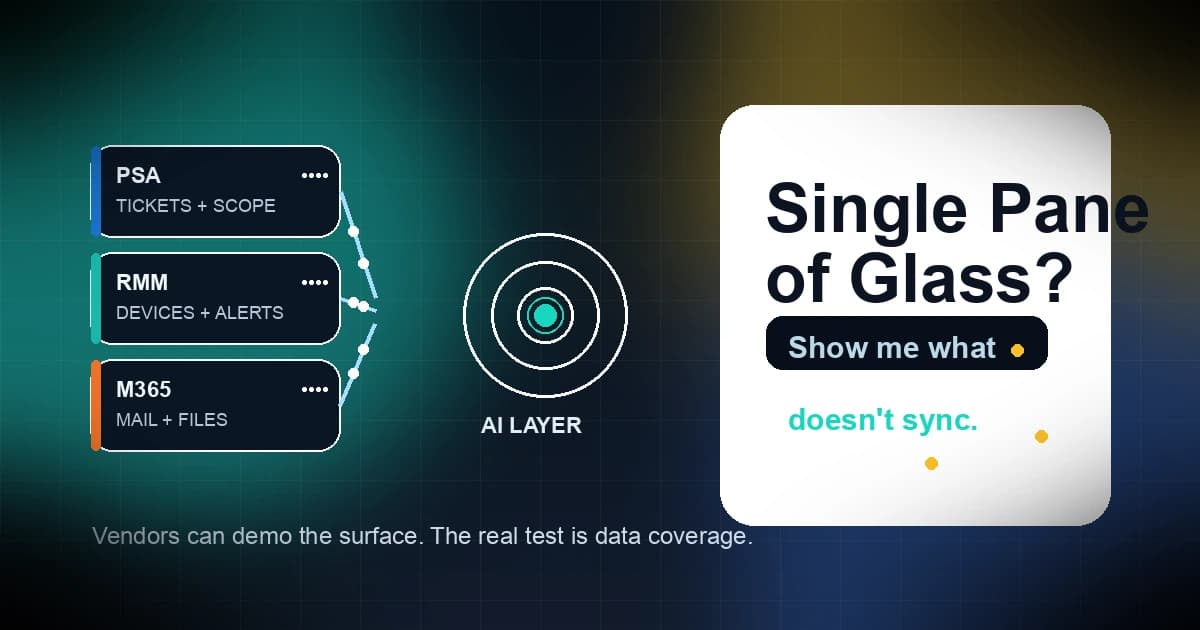

Vendors promised "single pane of glass" for 15 years. Now they're calling it an "agentic layer." Same pitch. New name. Same question nobody answered: does the data actually flow?

That line is not just a decent hook. It is the whole market problem.

Every MSP has lived through some version of this movie. First the PSA suite. Then the RMM bundle. Then the security platform. Then the "one console" pitch. The label changes. The hard part does not. Data still has to move between systems. Objects still need a source of truth. Sync still breaks. And the demo still looks clean because the demo data is clean.

ConnectWise's zofiQ announcement in April 2026 and the follow-up Channel Dive interview are the latest examples. The language shifted from "single pane" to "horizontal agentic layer," but the promise is the same: put one smarter layer on top and the rest of the stack will feel unified. That is the story vendors keep telling because it sells. It is not the same as solving the underlying problem.

The old promise never fixed the plumbing

MSPs have heard this pitch in different packaging for years.

A vendor claims it will simplify operations, reduce friction, and give you one place to run the business. What it really means is that the surface gets nicer while the messy parts stay hidden. The console looks better. The pricing deck gets cleaner. The workflow still depends on a stack full of stale records, partial syncs, and quiet exceptions nobody wants to document.

That is fine if the product is just a dashboard.

It is a problem if the product claims to make decisions for you.

Why an AI layer inherits the mess below it

An AI layer does not create truth. It consumes whatever the underlying systems expose.

If the PSA has stale company names, the RMM is missing devices, the M365 tenant is misconfigured, or the connector only syncs part of the object model, the AI layer does not repair that. It wraps the same mess in a nicer sentence.

That creates a worse failure mode than the old suite pitch:

- A ticket summary sounds crisp but misses the field that actually matters.

- A scope looks complete but skips a site because the source system never saw it.

- A recommendation treats a stale asset as active.

- A workflow looks autonomous until the connector drops and the gaps appear.

- A manager trusts the output because it sounds polished, not because it is correct.

The model is not the problem. The data contract is.

That is why "agentic" is not a magic word. It is a responsibility word. The moment a system starts acting across tools, you need to know exactly what it can see, what it can change, what it cannot reach, and what happens when one connector coughs.
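To make the "data contract" idea concrete, here is a minimal sketch of a guard an agent could run before it acts on a record. Everything in it is an assumption for illustration: the `SourceRecord` fields, the `can_act_on` function, and the system labels are hypothetical and do not describe any vendor's product or API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class SourceRecord:
    """One record as the agent sees it (hypothetical shape)."""
    system: str              # e.g. "psa" or "rmm"; labels are illustrative
    object_id: str
    last_synced: datetime    # when the connector last refreshed this record
    connector_healthy: bool  # whether the connector is currently reachable


def can_act_on(record: SourceRecord, max_age: timedelta, now: datetime) -> tuple[bool, str]:
    """Return (allowed, reason). Refuse when the connector is down or the data is stale."""
    if not record.connector_healthy:
        return False, f"{record.system} connector is offline"
    age = now - record.last_synced
    if age > max_age:
        return False, f"{record.system} record is stale ({age} old, limit {max_age})"
    return True, "ok"


# Usage: an RMM device record last refreshed two hours ago, with a 15-minute limit.
now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
device = SourceRecord("rmm", "dev-2", now - timedelta(hours=2), True)
allowed, reason = can_act_on(device, timedelta(minutes=15), now)
print(allowed, reason)
```

The point of the sketch is the shape, not the thresholds: the agent refuses and explains itself rather than writing a polished sentence on top of a stale record.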

The one question that cuts through the demo

Show me what does not sync.

Not "what do you support." Not "what does the marketing page say." Not "what can the agent do in the happy path."

Show me what does not sync.

That is the question because every real failure starts in the gap between what the vendor says and what the vendor actually moves.

Ask for the boring details:

- Which objects sync both ways?

- Which fields are read-only?

- What is the freshness window?

- What happens when a connector fails?

- Where does the audit trail live?

- What is the rollback path when the agent makes a bad move?

If the answer comes back as "mostly everything" or "it depends on the integration," stop there. That is not clarity. That is a warning label.

What honest AI integration looks like

Honest AI integration is not flashy. It is explicit.

It starts with a coverage map that names the systems, the objects, the sync direction, and the delay. It tells you where the data is authoritative and where it is just cached. It shows you the error state instead of hiding it. It explains what the agent cannot touch. It gives you a log you can audit when the workflow goes sideways.
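A coverage map like that can be as simple as a table with one row per system and object. The sketch below is one possible shape, assuming hypothetical names (`CoverageRow`, `Direction`, `gaps`) and made-up sample rows; it is not taken from any real vendor's documentation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Direction(Enum):
    READ_ONLY = "read-only"
    BIDIRECTIONAL = "bidirectional"
    NOT_SYNCED = "not synced"


@dataclass(frozen=True)
class CoverageRow:
    system: str
    object_type: str
    direction: Direction
    max_lag_minutes: Optional[int]  # None means the lag has not been defined
    authoritative: bool             # True if this system is the source of truth


def gaps(rows: list[CoverageRow]) -> list[str]:
    """List the rows a buyer should ask about: unsynced objects and undefined lag."""
    findings = []
    for row in rows:
        label = f"{row.system}/{row.object_type}"
        if row.direction is Direction.NOT_SYNCED:
            findings.append(f"{label}: does not sync")
        elif row.max_lag_minutes is None:
            findings.append(f"{label}: lag not defined")
    return findings


# Sample rows are invented for illustration only.
COVERAGE = [
    CoverageRow("PSA", "company", Direction.BIDIRECTIONAL, 15, True),
    CoverageRow("RMM", "device", Direction.READ_ONLY, None, True),
    CoverageRow("M365", "license", Direction.NOT_SYNCED, None, False),
]

for finding in gaps(COVERAGE):
    print(finding)
```

If a vendor cannot fill in a table like this for your stack, the empty cells are the answer to "show me what does not sync."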

That sounds boring because it is supposed to.

The vendor that can show you a live coverage matrix is the vendor that understands the actual problem. The vendor that keeps talking about "intelligence" but dodges the sync map is selling a story, not a system.

There are four red flags I would not ignore:

- They only talk about outcomes and never show source-of-truth rules.

- They say "near real-time" but will not define the lag.

- They cannot explain what happens when one connector is offline.

- They have no answer for the first support ticket after the agent makes a bad call.

If those gaps sound familiar, they should. That is the same pattern MSPs saw during the "single pane of glass" years. The name changed. The evasions did not.

What this means for MSPs buying AI

If you are evaluating AI for quoting, scoping, service delivery, or support automation, do not start with the model. Start with the data sources.

Look at the PSA records. Look at the RMM inventory. Look at Microsoft 365 context. Look at permissions and audit logs. Look at the places where your team already loses time because the systems do not agree.

Then ask one more question: does the tool reduce work, or does it just move the work somewhere else?

If the vendor says it drafts things faster, that is not enough. Faster wrong answers are still wrong answers.

If the tool needs you to babysit prompts, upload spreadsheets, or hand-correct every output before it becomes useful, it is not removing friction. It is charging you for new friction.

This is why the broader AI story matters, too. AI can help MSPs, but only when it is grounded in live environment data instead of wishful thinking. Copilot Cowork is a good reminder that agentic features are only as safe as the permissions and data underneath them. The risk is not that the system is smart. The risk is that it is smart inside bad assumptions.

That is also why quoting and scoping matter here. If the system cannot tell you what does not sync, it cannot tell you what belongs in the scope. Missed data becomes missed labor. Missed labor becomes margin loss. Margin loss is how a nice demo turns into a bad quarter.

At Scopable, we start with real PSA, RMM, and M365 data before we generate a scope or quote. The point is not to make the layer look clever. The point is to make the output correct enough to trust.

If you want the version that starts with actual data instead of a presentation deck, join Scopable early access.
